Difference between revisions of "Project Week 25/Segmentation for improving image registration of preoperative MRI with intraoperative ultrasound images for neuro-navigation"


*[http://www.mic.uni-bremen.de/jennifer-nitsch/ Jennifer Nitsch] (University of Bremen, Germany)

*[http://www.mic.uni-bremen.de/cmt-management-team/scheherazade-kras/ Scheherazade Kraß] (University of Bremen, Germany)

*[http://campar.in.tum.de/Main/JuliaRackerseder Julia Rackerseder] (Technical University of Munich, Germany)

==Project Description==


*The next step would be to analyze the improvement of registration quality with different segmentations/generated landmarks in order to adapt the segmentation algorithms.

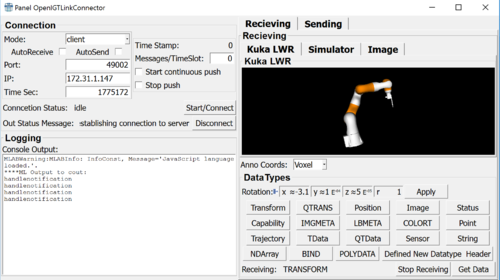

*Using advanced segmentation and registration features for iterative robot control. The respective status of the Kuka LWR iiwa (position, configuration) is simulated and visualized as a 3-D model in MeVisLab. Medical data sets including the target pose (position and path towards it) can also be sent and received via the OpenIGTLink network protocol.

| | | | ||

Revision as of 15:18, 26 June 2017


Key Investigators

- Jennifer Nitsch (University of Bremen, Germany)

- Scheherazade Kraß (University of Bremen, Germany)

- Julia Rackerseder (Technical University of Munich, Germany)

Project Description

| Objective | Approach and Plan | Progress and Next Steps |

|---|---|---|

|

it would be to discuss in which "form" one or multiple segmented structures should influence the registration result. |

Illustrations

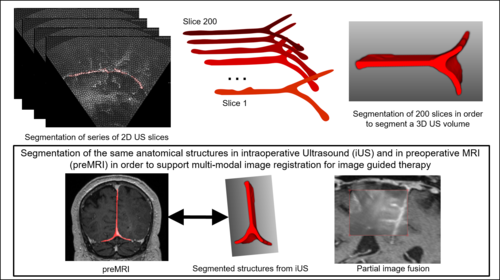

Using segmented structures as a guiding frame for multi-modal image registration:

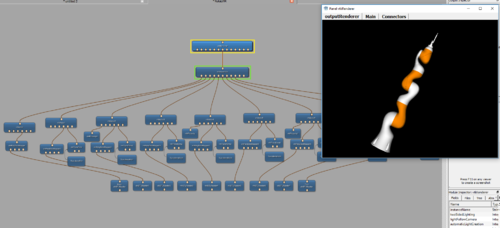

LWR Robot simulation in MeVisLab:

Background and References

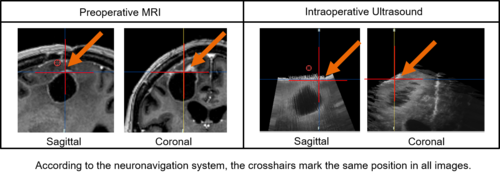

In glioma surgery, neuronavigation systems assist in determining the tumor's location and estimating its extent. However, the intraoperative situation diverges considerably from the preoperative situation shown in the MRI scan on the navigation system. The surgeon must mentally account for the movement of brain tissue during surgery, caused by brain shift and tissue removal, a task that becomes more challenging in the later phases of tumor resection.

Moreover, this mental compensation is exhausting, and the shift of cerebral structures must be expected to be non-uniform, which implies a deformation of the image data. This makes the shift especially hard to predict and model mentally.

Thus, intraoperative imaging modalities are used to visualize the current intraoperative situation. Intraoperative ultrasound (iUS), for instance, is easy to use during surgery, offers real-time information, is widely available at low cost, and involves no radiation exposure. These are important advantages when iUS is compared with intraoperative CT (iCT) or intraoperative MRI (iMRI). However, in image-guided surgery, precise registration of iUS with preoperative MRI (preMRI), and the image fusion that builds on it, is still an unsolved problem. The different representations of cerebral structures in the two modalities, as well as artifacts within the iUS, hinder direct fusion of both modalities.
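
One simple way to put a number on how well iUS and preMRI are aligned is the mean distance between corresponding landmarks before and after registration. The sketch below is only an illustration of that idea, not the project's evaluation method: the landmark coordinates are made up, the function names `mean_landmark_error` and `translate` are hypothetical, and a pure translation stands in for whatever (likely deformable) transform a real registration would produce.

```python
import math


def mean_landmark_error(fixed_points, moving_points):
    """Mean Euclidean distance between paired landmarks (same unit as the inputs)."""
    if len(fixed_points) != len(moving_points) or not fixed_points:
        raise ValueError("need two non-empty landmark lists of equal length")
    distances = [math.dist(f, m) for f, m in zip(fixed_points, moving_points)]
    return sum(distances) / len(distances)


def translate(points, offset):
    """Apply a pure translation; a stand-in for a real registration transform."""
    return [tuple(c + o for c, o in zip(p, offset)) for p in points]


# Hypothetical landmark positions in mm (illustrative values, not project data).
mri_landmarks = [(10.0, 20.0, 30.0), (15.0, 25.0, 35.0), (40.0, 10.0, 5.0)]
ius_landmarks = [(13.0, 24.0, 30.0), (18.0, 29.0, 35.0), (43.0, 14.0, 5.0)]

# Error before registration, then after applying the assumed correction.
error_before = mean_landmark_error(mri_landmarks, ius_landmarks)
registered = translate(ius_landmarks, (-3.0, -4.0, 0.0))
error_after = mean_landmark_error(mri_landmarks, registered)

print(f"mean landmark error before: {error_before:.2f} mm, after: {error_after:.2f} mm")
```

Comparing this value across different segmentations or landmark sets would give one possible basis for judging which of them helps the registration most. Because brain shift is non-uniform, a single global translation like the one used here would generally not be sufficient in practice.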