Slicer3:Remote Data Handling

Current status of Slicer's (local) data handling

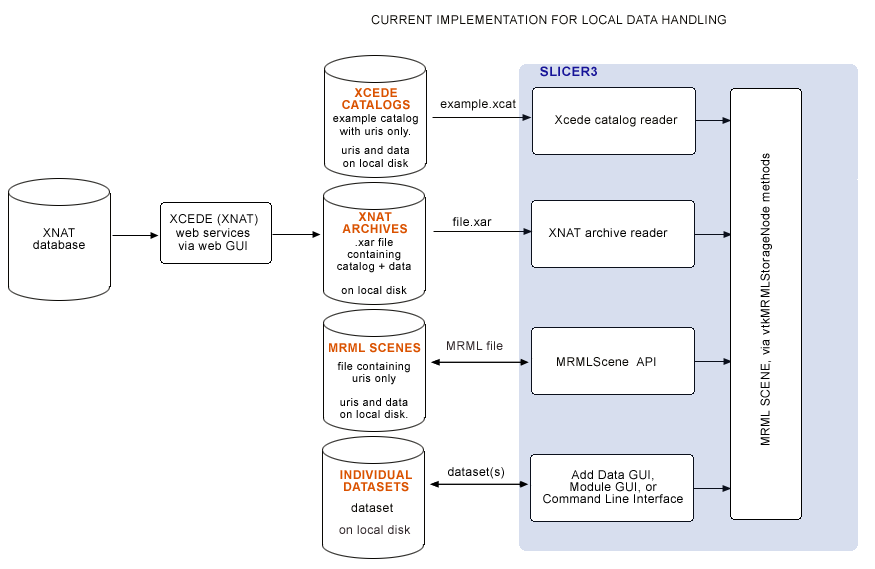

Currently, MRML files, XCEDE catalog files, XNAT archives and individual datasets are all loaded from local disk. All remote datasets are downloaded (via web interface or command line) outside of Slicer. In the BIRN 2007 AHM we demonstrated downloading .xar files from a remote database, and loading .xar and .xcat files into Slicer from local disk using Slicer's XNAT archive reader and XCEDE2.0 catalog reader. Slicer's current scheme for data handling is shown below:

Goal for how Slicer would upload/download from remote data stores

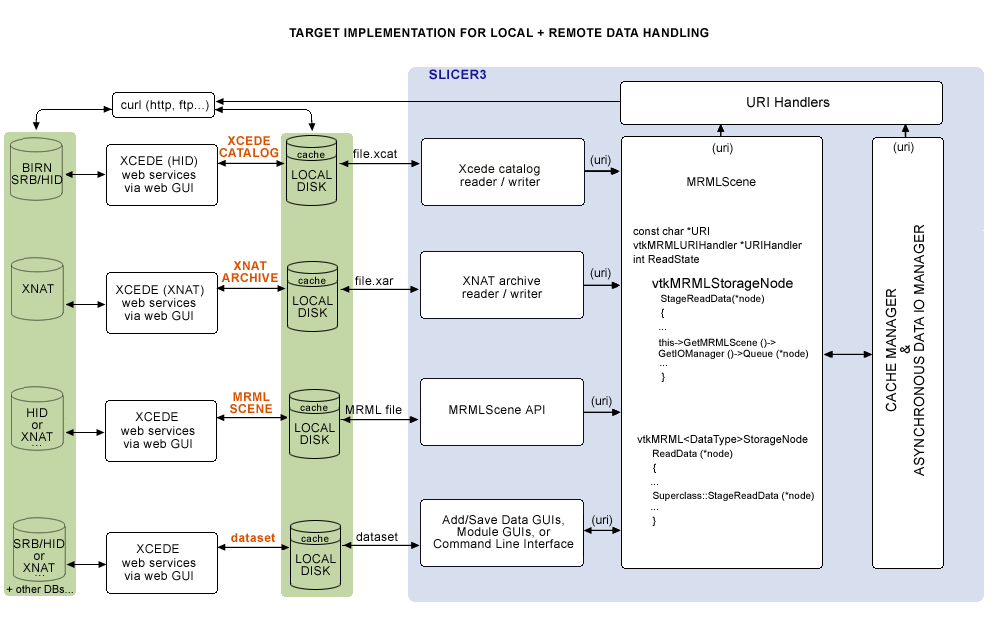

Eventually, we would like to download uris remotely or locally from the Application itself, and have the option of uplaoding to remote stores as well. A draft sketch is below (feedback please!), which features a new Remote Reference Handler, owned by the MRMLScene, and an Asynchronous I/O and caching mechanism:

The initial class design extends MRML and the Slicer3 Application Settings, and defines a vtkURIHandler (or vtkMRMLURIHandler?) and an interface to the Asynchronous I/O Manager:

The following new settings will be added to the Application Interface:

Slicer3 Application Interface Settings:

- CacheDirectory

- Enable/Disable asynchronous I/O

- Instantiate and register URI handlers (?)

The following are example URIs that we’d want to handle:

- ftp://user:pw@host:port/path/to/volume.nrrd (read and write)

- http://host/file.utp (read only)
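As a rough illustration of what a handler would have to extract from URIs like these, here is a minimal Python sketch using the standard `urllib.parse` module. The `describe_uri` function and the `SCHEME_ACCESS` table are hypothetical names introduced only for this example, and the read/write table simply restates the two examples above; the real handlers would be C++ classes.

```python
from urllib.parse import urlsplit

# Hypothetical table (an assumption for this sketch): which operations
# each URI scheme would support, mirroring the examples in the list above.
SCHEME_ACCESS = {
    "ftp": {"read", "write"},
    "http": {"read"},
    "file": {"read", "write"},
}


def describe_uri(uri):
    """Split a URI into the pieces a handler would need."""
    parts = urlsplit(uri)
    # A bare POSIX path has no scheme; treat it as a local file.
    scheme = parts.scheme or "file"
    return {
        "scheme": scheme,
        "host": parts.hostname,
        "port": parts.port,          # None when the URI gives no port
        "user": parts.username,
        "password": parts.password,
        "path": parts.path,
        "is_remote": scheme != "file",
        "access": SCHEME_ACCESS.get(scheme, set()),
    }
```

For example, `describe_uri("ftp://user:pw@host:21/path/to/volume.nrrd")` reports the scheme `ftp`, the credentials, and the path separately (port 21 is just an illustrative value), so a password prompter would only be needed when the URI carries no password.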

The following new methods (and members) will be defined in the vtkMRMLScene:

vtkMRMLScene members:

- CacheDirectory

- URIHandlerCollection

vtkMRMLScene methods:

- Set/GetCacheDirectory ( *dir )

  - sets and gets the CacheDirectory member

- RegisterURIHandler ( *vtkMRMLURIHandler )

  - adds a URIHandler to the collection

- GetURIHandlerCollection ()

  - returns the URIHandlerCollection
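To make the intended bookkeeping concrete, the following Python sketch models the scene-side members and methods listed above. It is only a stand-in for the proposed C++ additions to vtkMRMLScene: the `Scene` and `SchemeHandler` classes and the `find_uri_handler` helper are assumptions made for illustration and are not part of the design as written.

```python
class Scene:
    """Stand-in for vtkMRMLScene: owns the cache directory and the handlers."""

    def __init__(self):
        self._cache_directory = None
        self._uri_handlers = []

    def set_cache_directory(self, directory):
        self._cache_directory = directory

    def get_cache_directory(self):
        return self._cache_directory

    def register_uri_handler(self, handler):
        # Registering the same handler twice has no effect.
        if handler not in self._uri_handlers:
            self._uri_handlers.append(handler)

    def get_uri_handler_collection(self):
        # Return a copy so callers cannot modify the registry directly.
        return list(self._uri_handlers)

    def find_uri_handler(self, uri):
        """Hypothetical helper: first registered handler that accepts uri."""
        for handler in self._uri_handlers:
            if handler.can_handle_uri(uri):
                return handler
        return None


class SchemeHandler:
    """Toy handler used only to exercise the registry (hypothetical)."""

    def __init__(self, scheme):
        self.scheme = scheme

    def can_handle_uri(self, uri):
        return uri.startswith(self.scheme + "://")
```

Handlers are queried in registration order, so a more specific handler would need to be registered before a more general one.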

A new class, vtkMRMLURIHandler, will be defined in /Libs/MRML:

vtkMRMLURIHandler members:

- URI

- CacheDir

- LocalFile

- vtkPasswordPrompter *PasswordPrompter

- ErrorString

- FileSize

- vtkMRMLStorageNode *DestinationStorageNode

vtkMRMLURIHandler (virtual) methods:

- Download (filter watcher?)

- Upload (filter watcher?)

- int CanHandleURI()

The vtkMRMLFileHandler, derived from vtkMRMLURIHandler, will also be defined in /Libs/MRML:

vtkMRMLFileHandler methods:

- Download (filter watcher?)

- Upload (filter watcher?)

- int CanHandleURI()
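The relationship between the abstract handler and the file handler could look roughly like the Python sketch below. It is not the proposed C++ interface: the class names `URIHandler` and `FileHandler`, the method arguments, and the boolean return values are all assumptions chosen for illustration, and the filter watcher is left out.

```python
import os
import shutil
from abc import ABC, abstractmethod
from urllib.parse import urlsplit


class URIHandler(ABC):
    """Stand-in for vtkMRMLURIHandler (names and signatures are assumed)."""

    def __init__(self):
        self.error_string = ""
        self.file_size = 0

    @abstractmethod
    def can_handle_uri(self, uri):
        """Return True if this handler knows how to transfer uri."""

    @abstractmethod
    def download(self, uri, local_file):
        """Copy the data at uri into local_file; return True on success."""

    @abstractmethod
    def upload(self, local_file, uri):
        """Copy local_file to uri; return True on success."""


class FileHandler(URIHandler):
    """Handles file:// URIs and bare POSIX paths with a plain file copy."""

    def can_handle_uri(self, uri):
        return urlsplit(uri).scheme in ("", "file")

    def download(self, uri, local_file):
        return self._copy(urlsplit(uri).path, local_file)

    def upload(self, local_file, uri):
        return self._copy(local_file, urlsplit(uri).path)

    def _copy(self, source, destination):
        try:
            shutil.copyfile(source, destination)
        except OSError as error:
            # Record the failure instead of raising, like an ErrorString member.
            self.error_string = str(error)
            return False
        self.file_size = os.path.getsize(destination)
        self.error_string = ""
        return True
```

Reporting failures through `error_string` rather than exceptions mirrors the ErrorString member listed above; an ftp or http handler would override the same three methods.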

Two use cases can drive a first-pass implementation

For BIRN, we'd like to demonstrate two use cases:

- The first is loading a combined FIPS/FreeSurfer analysis, specified in an Xcede catalog (.xcat) file that contains uris pointing to remote datasets, and viewing it with Slicer's QueryAtlas. (If we cannot get an .xcat via the HID web GUI, our approach would be to manually upload a test Xcede catalog file and its constituent datasets to some place on the SRB. We'll keep a copy of the catalog file locally, read it, and query the SRB for each uri in the .xcat file.)

- The second is running a batch job in Slicer that processes a set of remotely held datasets. Each iteration would take as arguments the XML file parameterizing the EMSegmenter, the uri for the remote dataset, and a uri for storing back the segmentation results.
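For the batch use case, the three arguments per iteration could be assembled as in this small Python sketch. Everything in it is hypothetical: the `BatchJob` record, the `plan_batch` function, and in particular the `-seg.nrrd` naming scheme for results are assumptions made only to show the shape of the loop.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BatchJob:
    parameter_file: str   # XML file parameterizing the EMSegmenter
    input_uri: str        # uri of the remote dataset to process
    output_uri: str       # uri where the segmentation result is stored


def plan_batch(parameter_file, input_uris, output_base_uri):
    """Build one job per remote dataset, all sharing the same parameter file."""
    jobs = []
    for input_uri in input_uris:
        name = input_uri.rstrip("/").rsplit("/", 1)[-1]
        stem = name.split(".", 1)[0]
        # Assumed naming scheme for results; not part of the design.
        output_uri = f"{output_base_uri.rstrip('/')}/{stem}-seg.nrrd"
        jobs.append(BatchJob(parameter_file, input_uri, output_uri))
    return jobs
```

Each resulting job would then be handed to one Slicer iteration, which downloads `input_uri`, runs the segmentation, and uploads the result to `output_uri`.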

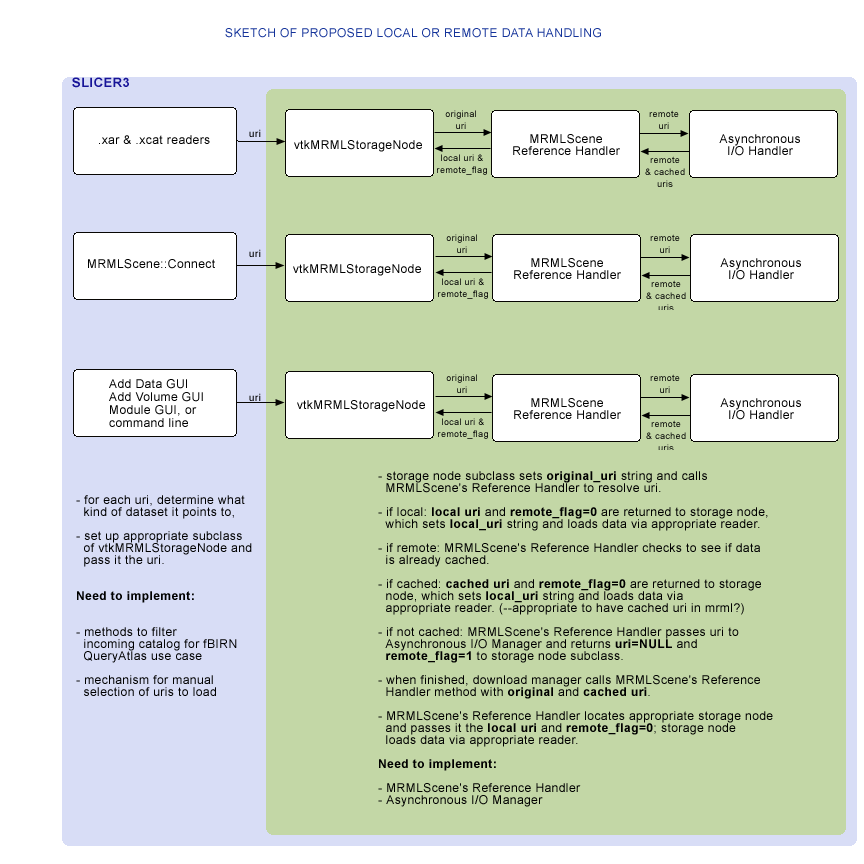

The subset of functionality we'd need is shown below. This is a sketch proposed for discussion and refinement:

Slicer-side things to implement

Proposed initial implementation plan would include

- GUI for selecting which uris in a catalog to open (with some filters, for convenience, for auto-selecting files for Qdec or QueryAtlas),

- the MRMLScene's Reference Handler and vtkMRMLStorageNode methods to interface with it, and

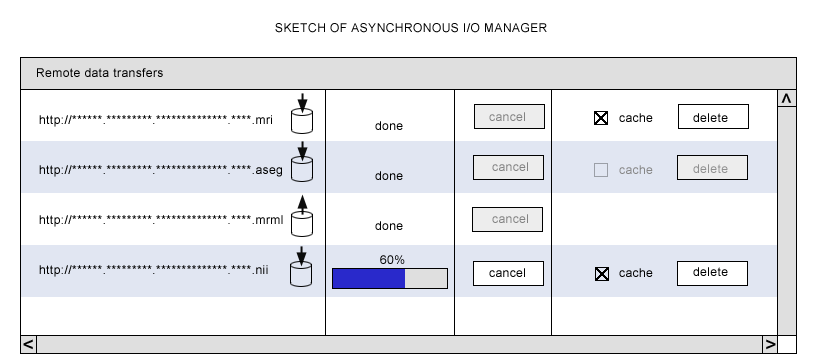

- the Asynchronous I/O and caching mechanism and GUI.

ITK-based mechanism handling remote data (for command line modules, batch processing, and grid processing) (Nicole)

This one is tentatively on hold for now.

Workflows to support

The first goal is to figure out what workflows to support, and a good implementation approach.

Currently, Load Scene, Import Scene, and Add Data options in Slicer all encapsulate two steps:

- locating a dataset, usually accomplished through a file browser, and

- selecting a dataset to initiate loading.

Then MRML files, Xcede catalog files, or individual datasets are loaded from local disk.

For loading remote datasets, the following options are available:

- break these two steps apart explicitly (easiest option),

- bind them together under the hood,

- or support both of these paradigms.

For now, we choose the first option.

Breaking apart "find data" and "load data":

Possible workflow A

- User downloads .xcat or .xml (MRML) file to disk using the HID or an XNAT web interface

- From the Load Scene file browser, the user selects the .xcat or .xml archive. If no locally cached versions exist, each remote file listed in the archive is downloaded to the /tmp directory (always locally cached) by the Asynchronous I/O Manager, and the cached (local) uri is passed to a vtkMRMLStorageNode method when the download is complete.

Possible workflow B

- User downloads .xcat or .xml (MRML) file to disk using the HID or an XNAT web interface

- From the Load Scene file browser, the user selects the .xcat or .xml archive. If no locally cached versions exist, each remote file in the archive is downloaded to /tmp (only if a flag is set) by the Asynchronous I/O Manager, and loaded directly into Slicer via a vtkMRMLStorageNode method when the download is complete. (How does load work if we don't save to disk first?)

Workflow C

- describe batch processing example here, which includes saving to local or remote.

In each workflow, the data gets saved to disk first and is then either loaded into a StorageNode or uploaded to the remote location from the cache.
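The "save to disk first, then load from cache" rule can be sketched in Python as a lookup that maps each remote uri to a stable file in the cache directory. The function names `cached_path_for` and `resolve`, the hashed file-name scheme, and the caller-supplied `download` callable are all assumptions made for this illustration.

```python
import hashlib
import os
from urllib.parse import urlsplit


def cached_path_for(uri, cache_directory):
    """Map a remote uri to a stable file name inside the cache directory."""
    # A short hash keeps files with the same base name from colliding.
    digest = hashlib.sha1(uri.encode("utf-8")).hexdigest()[:12]
    basename = os.path.basename(urlsplit(uri).path) or "download"
    return os.path.join(cache_directory, f"{digest}-{basename}")


def resolve(uri, cache_directory, download):
    """Return a local path for uri, downloading into the cache on a miss."""
    parts = urlsplit(uri)
    if parts.scheme in ("", "file"):
        return parts.path                  # already local, nothing to fetch
    local = cached_path_for(uri, cache_directory)
    if not os.path.exists(local):
        download(uri, local)               # caller-supplied transfer function
    return local
```

A storage node would then always read from the path returned by `resolve`, whether the data was local, already cached, or freshly downloaded.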

What data do we need in an .xcat file?

For the fBIRN QueryAtlas use case, we need a combination of a FreeSurfer morphology analysis and a FIPS analysis of the same subject. With the combined data in Slicer, we can use the FIPS analysis to view activation overlays co-registered to and overlaid onto the high-resolution structural MRI, and use the co-registered morphology analysis to determine the names of brain regions where activations occur.

The required analyses including all derived data are in two standard directory structures on local disk, and *hopefully* somewhere on the HID within a standard structure (check with Burak). These directory trees contain a LOT of files we don't need... Below are the files we *do* need for fBIRN QueryAtlas use case.

FIPS analysis (.feat) directory and required data

For instance, the FIPS output directory in our example dataset from Doug Greve at MGH is called sirp-hp65-stc-to7-gam.feat. Under this directory, QueryAtlas needs the following datasets:

- sirp-hp65-stc-to7-gam.feat/reg/example_func.nii

- sirp-hp65-stc-to7-gam.feat/reg/freesurfer/anat2exf.register.dat

- sirp-hp65-stc-to7-gam.feat/stats/(all statistics files of interest)

- sirp-hp65-stc-to7-gam.feat/design.gif (this image relates statistics files to experimental conditions)

FreeSurfer analysis directory, and required data

For instance, the FreeSurfer morphology analysis directory in our example dataset from Doug Greve at MGH is called fbph2-000670986943. Under this directory, QueryAtlas needs the following datasets:

- fbph2-000670986943/mri/brain.mgz

- fbph2-000670986943/mri/aparc+aseg.mgz

- fbph2-000670986943/surf/lh.pial

- fbph2-000670986943/surf/rh.pial

- fbph2-000670986943/label/lh.aparc.annot

- fbph2-000670986943/label/rh.aparc.annot
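A catalog filter for QueryAtlas could select only the files listed above from the full set of uris in a catalog. The Python sketch below does this with shell-style patterns from the standard `fnmatch` module. The pattern list restates the two file lists; the `select_query_atlas_uris` name and the idea of matching on uri suffixes are assumptions for illustration.

```python
from fnmatch import fnmatchcase

# Patterns derived from the FIPS and FreeSurfer file lists above.
# In fnmatch, "*" also matches "/" so a leading "*" absorbs any uri prefix.
QUERY_ATLAS_PATTERNS = [
    "*.feat/reg/example_func.nii",
    "*.feat/reg/freesurfer/anat2exf.register.dat",
    "*.feat/stats/*",
    "*.feat/design.gif",
    "*/mri/brain.mgz",
    "*/mri/aparc+aseg.mgz",
    "*/surf/lh.pial",
    "*/surf/rh.pial",
    "*/label/lh.aparc.annot",
    "*/label/rh.aparc.annot",
]


def select_query_atlas_uris(uris):
    """Keep only the catalog uris that QueryAtlas needs, in catalog order."""
    return [
        uri
        for uri in uris
        if any(fnmatchcase(uri, pattern) for pattern in QUERY_ATLAS_PATTERNS)
    ]
```

The same approach would work for a Qdec filter by swapping in a different pattern list.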

What do we want HID webservices to provide?

- Question: are FIPS and FreeSurfer analyses (including QueryAtlas required files listed above) for subjects available on the HID yet? --Burak says not yet.

- Given that, can we manually upload an example .xcat, and the datasets it points to, to the SRB, and then download each dataset from the HID in Slicer using some helper application (like curl)?

- (Eventually.) The BIRN HID webservices shouldn't really need to know the subset of data that QueryAtlas needs... maybe the web interface can take a BIRN ID, collect all uris (http://....) in the FIPS and FreeSurfer directories, and package these into an Xcede catalog.

- (Eventually.) The catalog could be requested and downloaded from the HID web GUI, with a name like .xcat or .xcat.gzip or whatever. QueryAtlas could then open this file (or unzip and open) and filter for the relevant uris for an fBIRN or Qdec QueryAtlas session.

- Then, for each uri in a catalog (or .xml MRML file), we'll use (curl?) to download; so we need all datasets to be publicly readable.

- Can we create a directory (even a temporary one) on the SRB/BWH HID for Slicer data uploads?

- We need some kind of upload service: a function call that takes a dataset and a BIRN ID and uploads the data to the appropriate remote directory.
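If curl does end up as the helper application, the per-uri transfers could be driven as in the Python sketch below. The curl options used (`--fail`, `--silent`, `--show-error`, `--location`, `--output`, `--upload-file`) are standard curl flags; the function names are hypothetical, and whether the HID or SRB would accept uploads this way is an open question, not something this sketch settles.

```python
import subprocess


def build_curl_download_command(uri, local_file):
    """Command line that fetches uri into local_file, following redirects."""
    return ["curl", "--fail", "--silent", "--show-error", "--location",
            "--output", local_file, uri]


def build_curl_upload_command(local_file, uri):
    """Command line that sends local_file to uri (assumes the server allows it)."""
    return ["curl", "--fail", "--silent", "--show-error",
            "--upload-file", local_file, uri]


def run_transfer(command):
    """Run a curl command; True means curl exited with status 0.

    Raises FileNotFoundError if curl is not installed.
    """
    return subprocess.run(command, check=False).returncode == 0
```

Because the commands carry no credentials, this only works as long as the datasets are publicly readable, as noted in the list above.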

MRMLScene's Reference Handler and StorageNode methods (Nicole) and Asynchronous I/O Manager (Wendy)

The vtkMRMLStorageNode superclass needs methods that interface to the MRMLScene's Reference Handler. The Reference Handler, in turn, needs to interface with the Asynchronous I/O and caching mechanism.

- Each subclass of vtkMRMLStorageNode will call the superclass method first.

- The superclass method will call the MRMLScene's Reference Handler, which evaluates the uri (local or remote).

  - If local, the Reference Handler will pass the uri back to the storage node.

  - If remote, the Reference Handler will check whether the data is cached on disk (in /tmp or wherever).

    - If the data is cached, the Reference Handler will pass the cached uri back to the storage node.

    - If the data is not cached, a Reference Handler method will spawn an independent thread of control that will interact with the Asynchronous I/O Manager, passing it the type of storage node required for the dataset:

      - the thread will create a new download entry and observe the cancel button,

      - it will make whatever call it needs to download (http),

      - it will display progress on a progress meter,

      - and when complete, it will call a Reference Handler method with the cached uri.
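The uncached branch of this flow (spawn a thread, observe cancel, report progress, call back with the cached uri) can be sketched in Python with the standard `threading` module. The `DownloadTask` class, its callbacks, and the `.part` temporary-file convention are assumptions for illustration; the actual transfer is injected as `fetch_chunks`, and error handling is omitted for brevity.

```python
import os
import threading


class DownloadTask:
    """One download entry in a hypothetical asynchronous I/O manager."""

    def __init__(self, uri, cached_path, fetch_chunks, on_complete,
                 on_progress=None):
        self.uri = uri
        self.cached_path = cached_path
        self.bytes_received = 0
        self.status = "pending"
        self._fetch_chunks = fetch_chunks    # callable: uri -> iterable of bytes
        self._on_complete = on_complete      # called with the cached path
        self._on_progress = on_progress      # called with the byte count so far
        self._cancelled = threading.Event()  # set by the cancel button
        self._thread = threading.Thread(target=self._run, daemon=True)

    def start(self):
        self.status = "running"
        self._thread.start()

    def cancel(self):
        self._cancelled.set()

    def wait(self, timeout=None):
        self._thread.join(timeout)

    def _run(self):
        # Write to a temporary name so a partial file never looks cached.
        partial = self.cached_path + ".part"
        with open(partial, "wb") as output:
            for chunk in self._fetch_chunks(self.uri):
                if self._cancelled.is_set():
                    break
                output.write(chunk)
                self.bytes_received += len(chunk)
                if self._on_progress is not None:
                    self._on_progress(self.bytes_received)
        if self._cancelled.is_set():
            os.remove(partial)
            self.status = "cancelled"
            return
        os.replace(partial, self.cached_path)
        self.status = "done"
        self._on_complete(self.cached_path)
```

Note that `on_complete` runs on the download thread, so a real implementation would need to hand the cached uri back to the Reference Handler in a thread-safe way.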

Saving Data back to remote site

- Since we have no plan for where to save MRML files on the HID, can we have a webservices function we can call from Slicer that writes a file to /dev/null on the HID in the meantime?